OpenAI's Whisper Large-v3 Turbo cuts the decoder from 32 layers to 4, dropping parameters from 1.55B to 809M. The result: 2–5× faster transcription with near-identical accuracy. Whisper Notes ships it on Mac with Apple Silicon.

V3 Turbo vs V3: What Changed

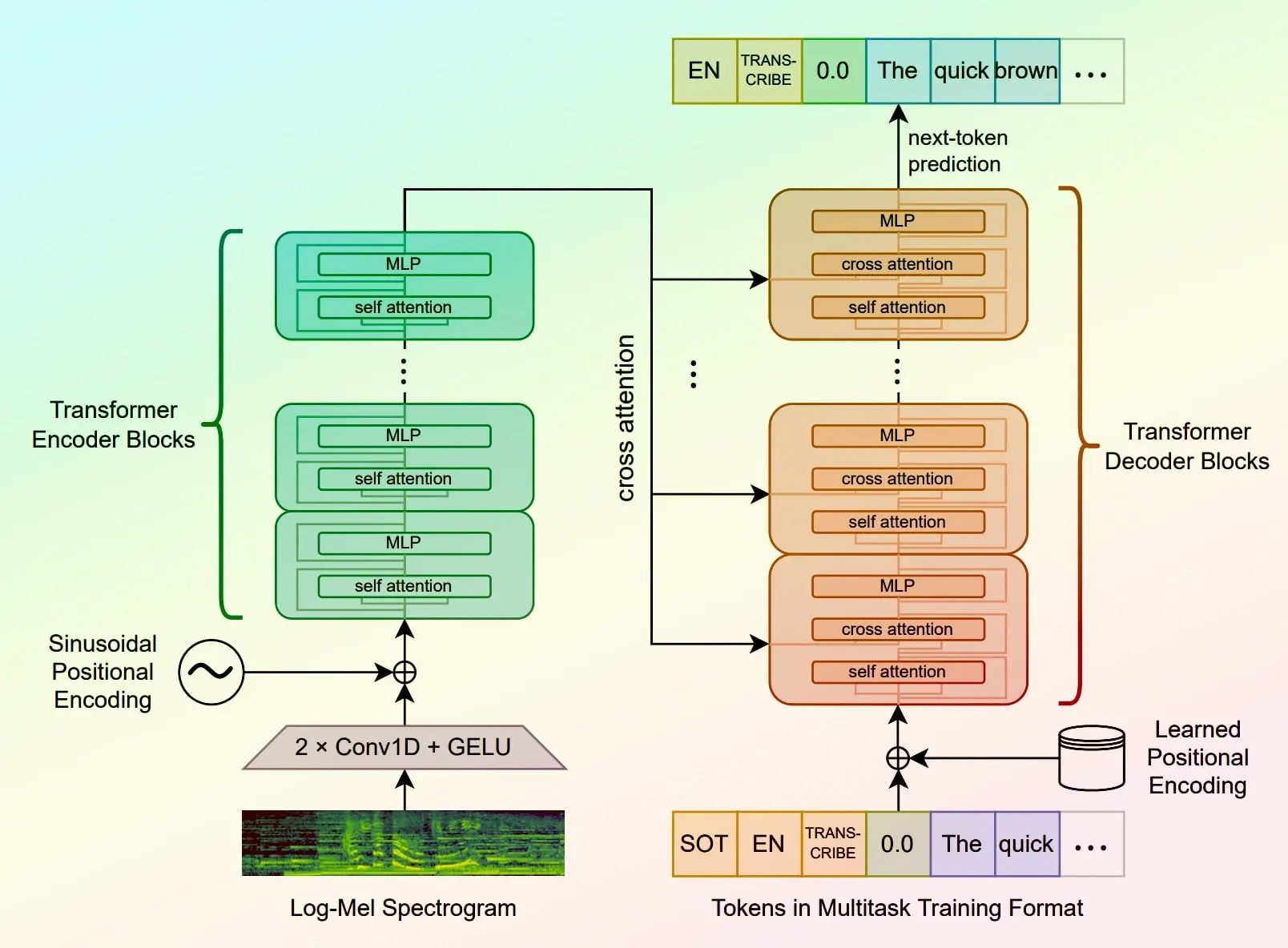

Turbo is not a new architecture. It's the exact same Whisper Large-v3 model with the decoder pruned from 32 layers to 4, then fine-tuned to recover accuracy. The encoder is untouched.

| | Large-v3 Turbo | Large-v3 |
|---|---|---|
| Parameters | 809M | 1,550M |
| Decoder layers | 4 | 32 |
| Languages | 99 | 99 |
| Translation task | Not supported | Supported |
| License | MIT | Apache 2.0 |

The translation task was explicitly excluded from Turbo's training data. The full Large-v3 model supports it, but Whisper Notes ships only the Turbo variant of Whisper, so translation is handled separately via Apple Intelligence.
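Why does removing decoder layers alone buy so much speed? Amdahl's law gives a rough intuition. The sketch below assumes, purely for illustration, that some fraction of V3's runtime is spent in the decoder and that decoder cost scales linearly with layer count; the fractions it loops over are hypothetical, not measurements.

```python
# Toy Amdahl's-law model of decoder pruning. Assumptions (not measurements):
# a fraction of V3's runtime is spent in the decoder, and decoder cost scales
# linearly with the number of decoder layers.
V3_DECODER_LAYERS = 32
TURBO_DECODER_LAYERS = 4


def pruning_speedup(decoder_fraction: float,
                    old_layers: int = V3_DECODER_LAYERS,
                    new_layers: int = TURBO_DECODER_LAYERS) -> float:
    """Overall speedup when only the decoder's share of runtime shrinks."""
    if not 0.0 <= decoder_fraction <= 1.0:
        raise ValueError("decoder_fraction must be between 0 and 1")
    layer_ratio = new_layers / old_layers  # 4 / 32 = 0.125 of the old decoder cost
    new_runtime = (1.0 - decoder_fraction) + decoder_fraction * layer_ratio
    return 1.0 / new_runtime


# Hypothetical decoder shares, chosen only to show the shape of the curve.
for share in (0.5, 0.7, 0.9, 1.0):
    print(f"decoder share {share:.0%}: {pruning_speedup(share):.1f}x faster")
```

The loop prints 1.8x, 2.6x, 4.7x and 8.0x. Under these assumptions the ceiling is 8× (32 / 4), reached only if decoding were the entire workload, so speedups in the 2–5× range are consistent with the decoder taking a large but not total share of V3's runtime. Real numbers also depend on the encoder, memory bandwidth, and hardware-specific optimization, so treat this as intuition rather than a performance model.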

Speed Benchmark: Whisper Notes on Apple Silicon

In Whisper Notes, Turbo runs via Core ML on the Apple Neural Engine. Time to process 10 minutes of audio on each device:

| Device | Whisper V3 | V3 Turbo | Speedup |
|---|---|---|---|
| iPhone 15 Pro | 425 s | 82 s | 5.2× |
| iPad Pro M2 | 380 s | 71 s | 5.4× |
| MacBook Pro M2 | 316 s | 63 s | 5.0× |

The 5× speedup is specific to Whisper Notes on Apple Silicon, where the smaller decoder benefits from Neural Engine optimization. On GPU with frameworks like faster-whisper, the gap narrows to ~2.7× (see community benchmarks below).
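The speedup column is V3's time divided by Turbo's. Dividing the 600 seconds of audio by each processing time gives throughput as a multiple of real time (often reported as inverse real-time factor, RTFx). A minimal sketch that recomputes both from the table; the helper names are illustrative:

```python
# Timings from the Apple Silicon table: seconds to process 10 minutes of audio.
AUDIO_SECONDS = 10 * 60

TIMINGS = {
    "iPhone 15 Pro": (425, 82),   # (Whisper V3, V3 Turbo)
    "iPad Pro M2": (380, 71),
    "MacBook Pro M2": (316, 63),
}


def speedup(v3_s: float, turbo_s: float) -> float:
    """How many times faster Turbo is than V3 on the same audio."""
    return v3_s / turbo_s


def rtfx(processing_s: float, audio_s: float = AUDIO_SECONDS) -> float:
    """Inverse real-time factor: seconds of audio processed per second of compute."""
    return audio_s / processing_s


for device, (v3_s, turbo_s) in TIMINGS.items():
    print(f"{device}: {speedup(v3_s, turbo_s):.1f}x speedup, "
          f"V3 {rtfx(v3_s):.1f}x real time, Turbo {rtfx(turbo_s):.1f}x real time")
```

On the MacBook Pro M2, for example, Turbo processes audio at roughly 9.5× real time; assuming throughput stays constant, an hour of audio would take about 6.3 minutes.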

Accuracy: WER Comparison

The Hugging Face Open ASR Leaderboard tests both models on the same English datasets. Turbo's word error rate (WER) is within half a percentage point of V3's on every dataset:

| Dataset | V3 Turbo WER | V3 WER |
|---|---|---|
| LibriSpeech Clean | 2.10% | 2.01% |
| LibriSpeech Other | 4.24% | 3.91% |
| GigaSpeech | 10.14% | 10.02% |
| Earnings22 | 11.63% | 11.29% |
| AMI | 16.13% | 15.95% |
| Leaderboard average (all English test sets) | 7.83% | 7.44% |

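V3 is slightly more accurate on every dataset, but the gap is small: 0.39 percentage points in the leaderboard average, which also covers English test sets not listed above (so it differs from the mean of these five rows). For most real-world transcription, you are unlikely to notice the difference.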
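WER counts the word substitutions, deletions, and insertions needed to turn a model's transcript into the reference, divided by the number of reference words. A minimal sketch of that definition using word-level edit distance; leaderboards also normalize casing and punctuation before scoring, which this sketch skips:

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / reference word count,
    computed as a word-level Levenshtein distance."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] = edit distance between the reference words seen so far
    # and the first j hypothesis words (one DP row kept at a time).
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(prev[j] + 1,         # deletion
                          curr[j - 1] + 1,     # insertion
                          prev[j - 1] + cost)  # substitution or match
        prev = curr
    return prev[-1] / len(ref)
```

For example, `word_error_rate("the cat sat on the mat", "the cat sit on mat")` returns 2/6 ≈ 0.333: one substitution (sat to sit) and one deletion (the). Because insertions count too, WER can exceed 100%.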

On the YouTube-commons long-form evaluation (one of the largest open-source ASR benchmarks), Turbo scores 13.40% WER vs V3's 13.20% while processing audio at 129.5× real time vs 55.3× (inverse real-time factor, RTFx). That's 2.3× faster with nearly identical accuracy on real-world audio.

Community Benchmarks: GPU & CPU

Independent benchmarks from the faster-whisper and whisper.cpp communities show consistent results across hardware. Transcribing 13 minutes of audio with faster-whisper on GPU:

| Model | Precision | Time | GPU Memory | WER |
|---|---|---|---|---|
| Large-v3 Turbo | fp16 | 19.2 s | 2,537 MB | 1.92% |
| Large-v3 | fp16 | 52.0 s | 4,521 MB | 2.88% |
| Large-v3 Turbo | int8 | 19.6 s | 1,545 MB | 1.92% |
| Distil-Large-v3 | fp16 | 26.1 s | 2,409 MB | 2.39% |

Source: faster-whisper benchmark on an NVIDIA GPU, LibriSpeech clean validation split. At int8 precision, Turbo needs only about 1.5 GB of VRAM, small enough to fit on a 2 GB GPU.
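Reproducing this kind of run locally might look like the sketch below. It assumes a faster-whisper release (1.1.0 or later) that accepts the "large-v3-turbo" model name, plus a CUDA GPU. The 2,537 MB threshold is lifted from the fp16 row above and is a hypothetical rule of thumb, not a guarantee, since real memory use depends on beam size, audio length, and driver overhead. `pick_compute_type` and `transcribe_turbo` are illustrative names.

```python
# Hypothetical threshold: the fp16 memory figure measured in the benchmark above.
FP16_VRAM_MB = 2537


def pick_compute_type(free_vram_mb: int) -> str:
    """Use fp16 when the measured fp16 footprint fits, otherwise fall back to int8."""
    return "float16" if free_vram_mb > FP16_VRAM_MB else "int8"


def transcribe_turbo(audio_path: str, free_vram_mb: int) -> str:
    # Imported lazily so this module loads even without faster-whisper installed.
    from faster_whisper import WhisperModel

    model = WhisperModel("large-v3-turbo", device="cuda",
                         compute_type=pick_compute_type(free_vram_mb))
    segments, _info = model.transcribe(audio_path, beam_size=5)
    # `segments` is a lazy generator; iterating it is what runs the decoding.
    return " ".join(segment.text.strip() for segment in segments)
```

In this benchmark int8 matched fp16 on WER and was only 0.4 s slower, so defaulting to "int8" even on larger GPUs may be reasonable; measure on your own audio before committing.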

Batched inference shortens run times further for every model. Results on an RTX 3060 Laptop GPU (6 GB VRAM, int8 precision):

| Model | Sequential | Batched (10) | Batched WER |
|---|---|---|---|
| Large-v3 Turbo | 46.1 s | 18.7 s | 7.7% |
| Large-v3 | 230.8 s | 43.0 s | 7.9% |
| Large-v2 | 178.3 s | 43.2 s | 8.8% |
| Medium | 113.3 s | 26.3 s | 8.9% |

Source: NilaierMusic benchmark, Intel i7-12650H + RTX 3060 Laptop 6 GB, French audio, int8 precision.

With batched processing, Turbo has both the lowest WER of any model tested (7.7%) and the shortest run time. It's the clear sweet spot for production use.
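"Lowest WER while also fastest" is a Pareto-dominance claim: no other model is both faster and more accurate. A short sketch that checks it against the batched columns of the RTX 3060 table and also computes how much batching helped each model; the data are copied from the table and the helper names are illustrative:

```python
# (sequential s, batched s, batched WER %) copied from the RTX 3060 Laptop table
RESULTS = {
    "Large-v3 Turbo": (46.1, 18.7, 7.7),
    "Large-v3": (230.8, 43.0, 7.9),
    "Large-v2": (178.3, 43.2, 8.8),
    "Medium": (113.3, 26.3, 8.9),
}


def dominates(a: tuple, b: tuple) -> bool:
    """True if run a is no slower and no less accurate than b when batched,
    and strictly better on at least one of the two."""
    _, a_time, a_wer = a
    _, b_time, b_wer = b
    no_worse = a_time <= b_time and a_wer <= b_wer
    strictly_better = a_time < b_time or a_wer < b_wer
    return no_worse and strictly_better


# Models that no other model beats on both batched time and WER.
pareto_front = [
    name for name, row in RESULTS.items()
    if not any(dominates(other, row)
               for other_name, other in RESULTS.items() if other_name != name)
]

# How many times faster batching made each model.
batching_gain = {name: seq / batched for name, (seq, batched, _) in RESULTS.items()}

print(pareto_front)
for name, gain in batching_gain.items():
    print(f"{name}: {gain:.1f}x from batching")
```

The Pareto front contains only Large-v3 Turbo. Batching helps Large-v3 the most in relative terms (about 5.4× vs 2.5× for Turbo), but Turbo still finishes first because its sequential time was already far lower.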

Known Limitations (and How Whisper Notes Handles Them)

No built-in translation

Turbo was trained without translation data. It transcribes in the source language only — unlike Large-v3, which supports audio→English translation.

Whisper Notes — Apple Intelligence auto-translates transcripts into your chosen language, giving you bilingual output regardless of which model you use.
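If you drive Whisper yourself rather than through Whisper Notes, one option is to route audio-to-English translation requests to Large-v3 and plain transcription to Turbo. A minimal sketch, assuming faster-whisper 1.1.0 or later (for the "large-v3-turbo" model name); `pick_whisper_model` and `run_whisper` are hypothetical helpers:

```python
def pick_whisper_model(task: str) -> str:
    """Route by task: Turbo was trained without translation data, so use Large-v3 for it."""
    if task == "translate":
        return "large-v3"
    if task == "transcribe":
        return "large-v3-turbo"
    raise ValueError(f"unsupported task: {task!r}")


def run_whisper(audio_path: str, task: str = "transcribe") -> str:
    # Imported lazily so the routing helper works without faster-whisper installed.
    from faster_whisper import WhisperModel

    model = WhisperModel(pick_whisper_model(task), device="auto", compute_type="default")
    # task="translate" asks Whisper for English output; "transcribe" keeps the source language.
    segments, _info = model.transcribe(audio_path, task=task)
    return " ".join(segment.text.strip() for segment in segments)
```

The trade-off is loading the larger, slower model whenever translation is requested.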

More hallucination on noisy audio

Community reports indicate that Turbo hallucinates more than V3 on very short clips and noisy recordings. That is plausible given how much smaller its decoder is (4 layers vs 32).

Whisper Notes — runs Pyannote VAD before transcription, detecting speech segments and stripping silent or noise-only stretches so the model only processes audio that contains speech.
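Pyannote's VAD is a trained neural model, but the basic idea of voice activity detection can be shown with a much cruder technique: an energy threshold. The sketch below swaps in that simpler method purely for illustration and is not how Whisper Notes implements VAD; the frame size, threshold, and gap-bridging values are hypothetical and would need tuning on real recordings.

```python
import math


def energy_vad(samples, sample_rate=16_000, frame_ms=30, threshold=0.02, min_gap_frames=3):
    """Return (start_s, end_s) spans whose per-frame RMS energy exceeds `threshold`.

    Crude energy-threshold VAD for illustration only; Pyannote uses a trained
    neural model instead. All default parameters are hypothetical.
    """
    frame_len = int(sample_rate * frame_ms / 1000)
    # 1) Mark each fixed-size frame as voiced or silent by its RMS energy.
    voiced = []
    for start in range(0, len(samples), frame_len):
        frame = samples[start:start + frame_len]
        rms = math.sqrt(sum(x * x for x in frame) / len(frame))
        voiced.append(rms > threshold)

    # 2) Merge voiced frames into segments, bridging silent gaps shorter than min_gap_frames.
    segments, seg_start, silent_run = [], None, 0
    for i, is_voiced in enumerate(voiced):
        if is_voiced:
            if seg_start is None:
                seg_start = i
            silent_run = 0
        elif seg_start is not None:
            silent_run += 1
            if silent_run >= min_gap_frames:
                # End (exclusive) just after the last voiced frame.
                segments.append((seg_start, i - silent_run + 1))
                seg_start, silent_run = None, 0
    if seg_start is not None:
        segments.append((seg_start, len(voiced) - silent_run))

    frame_s = frame_len / sample_rate
    return [(s * frame_s, e * frame_s) for s, e in segments]
```

Only the returned spans would be passed to the transcription model. Unlike a trained VAD, an energy threshold also fires on loud non-speech noise, which is one reason to prefer a model such as Pyannote.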

Which Model Should You Use?

| Language | Recommended model | Why |
|---|---|---|
| English / European | Parakeet V3 | 10× faster than Whisper, better accuracy |
| Chinese / Japanese / Korean | SenseVoice | Purpose-built for CJK, 52× speed |
| Other languages | Whisper Large-v3 Turbo | 99 languages, high accuracy, slower than the two above |
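The recommendations above amount to a simple routing rule keyed on language. A minimal sketch, where the language sets are hypothetical placeholders (check each engine's documented language list before relying on them) and `recommend_model` is an illustrative name:

```python
# Hypothetical language groupings for illustration; consult each engine's own
# documentation for its actual supported languages.
CJK_LANGUAGES = {"zh", "ja", "ko"}
ENGLISH_EUROPEAN_LANGUAGES = {"en", "de", "fr", "es", "it", "pt", "nl", "pl"}


def recommend_model(language_tag: str) -> str:
    """Map a language tag such as "en" or "zh-Hans" to the suggested engine."""
    primary = language_tag.split("-")[0].lower()  # keep only the primary language subtag
    if primary in CJK_LANGUAGES:
        return "SenseVoice"
    if primary in ENGLISH_EUROPEAN_LANGUAGES:
        return "Parakeet V3"
    return "Whisper Large-v3 Turbo"  # fallback: broadest coverage (99 languages)
```

For example, `recommend_model("zh-Hans")` returns "SenseVoice", while a tag outside both sets, such as "sw", falls through to Whisper Large-v3 Turbo.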