We built offline meeting transcription for Mac. It records Zoom, Teams, and Google Meet calls, transcribes them locally with Parakeet V3, and summarizes them with Gemma 4. No cloud, no bot in the call. $6.99 once.

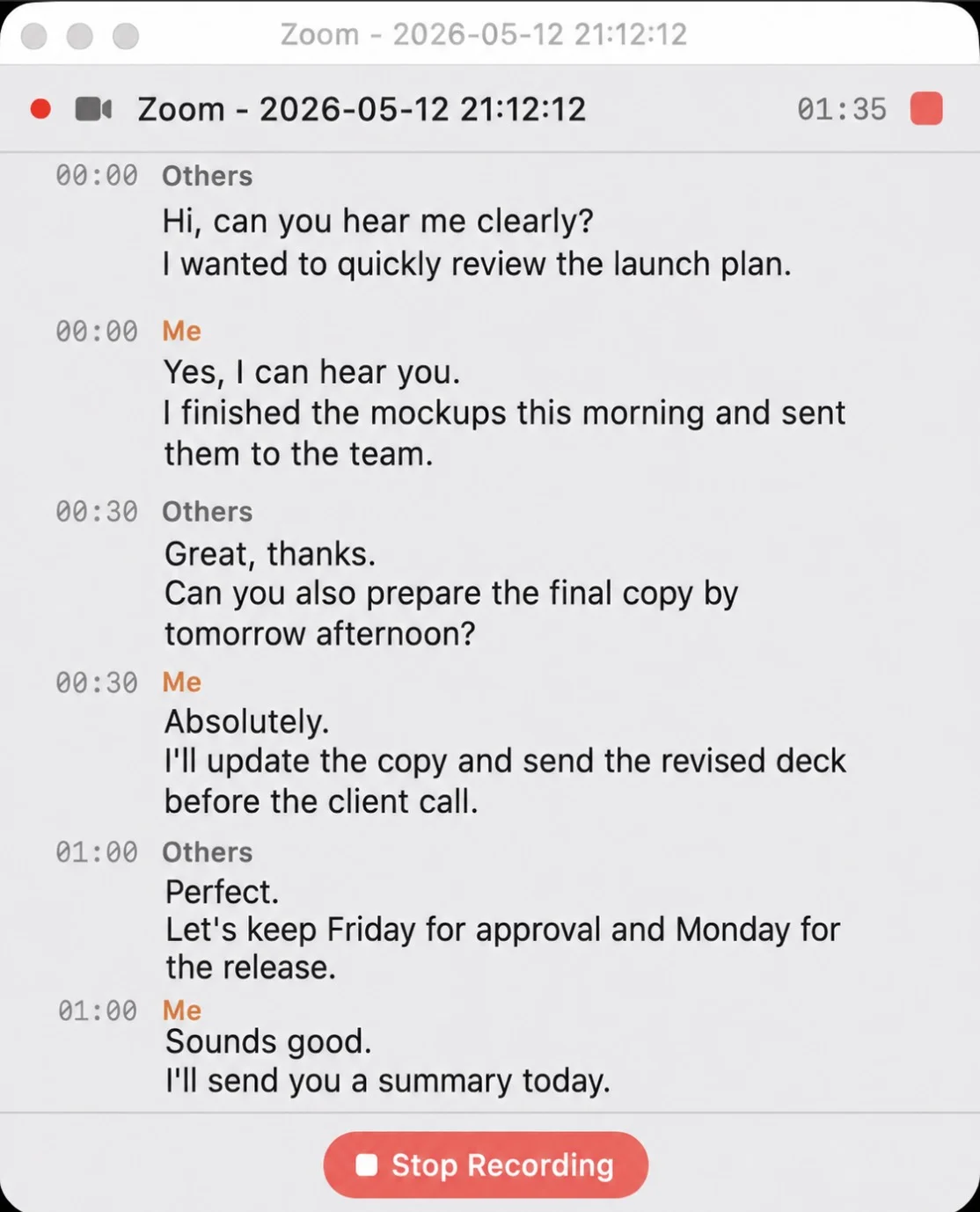

Recording a Zoom call in Whisper Notes — "Me" and "Others" are labeled by audio source

A Typical Monday

10 AM, Zoom call with a client. You open Whisper Notes, click record. The app captures system audio and your microphone simultaneously — nobody in the meeting sees a bot, nobody gets a notification, nothing shows up in the participant list.

An hour later, the call ends. You stop recording. Parakeet V3 transcribes 60 minutes of audio in about a minute, entirely on your Mac's Neural Engine. You click Summarize, and Gemma 4 extracts the key points. You click Action Items, and it pulls out every task and deadline mentioned. You send the meeting notes to the client. The audio never left your machine.

That's the whole workflow. Record, transcribe, summarize. All local.

What It Does

Recording

Whisper Notes captures system audio — the sound coming out of your speakers or headphones. If you can hear it on your Mac, we can transcribe it. Zoom, Teams, Google Meet, Webex, GoTo, Whereby, Jitsi, YouTube, podcasts, or any other app. It also records your microphone at the same time, so both sides of the conversation are captured.

No bot joins the call. This matters more than it sounds. If you've ever seen "Otter.ai Notetaker has joined the meeting" pop up in a Zoom call, you know what happens next — someone asks what it is, someone else gets uncomfortable, and the conversation shifts. With system audio capture, nobody knows you're recording except you.

Transcription

Parakeet V3 runs on Apple Silicon via Core ML. It processes English and 24 other European languages at roughly 60× real-time, so a 60-minute meeting finishes in about a minute. For Chinese, Japanese, or Korean, SenseVoice transcribes at about 52× real-time. Pyannote VAD (voice activity detection) strips silence before transcription, so the model only processes actual speech.
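To make the silence-stripping step concrete, here is a toy energy-threshold gate. It is not how Pyannote VAD works (that uses a neural model), and the function name, frame size, and threshold are assumptions chosen for illustration only. The idea it shows is the same: drop quiet frames before the ASR model sees them, and remember where the kept audio came from so timestamps can be mapped back.

```python
import math


def strip_silence(samples, frame_size=160, threshold=0.01):
    """Keep only frames whose RMS energy exceeds `threshold`.

    samples: list of floats in [-1.0, 1.0] (mono audio).
    Returns (kept_samples, kept_ranges). Each range is a
    (start_index, end_index) pair into the original audio, so
    transcript timestamps can be mapped back afterwards.
    """
    kept, ranges = [], []
    for start in range(0, len(samples), frame_size):
        frame = samples[start:start + frame_size]
        rms = math.sqrt(sum(s * s for s in frame) / len(frame))
        if rms <= threshold:
            continue  # treat this frame as silence and skip it
        kept.extend(frame)
        end = start + len(frame)
        if ranges and ranges[-1][1] == start:
            # Contiguous with the previous speech frame: extend that range.
            ranges[-1] = (ranges[-1][0], end)
        else:
            ranges.append((start, end))
    return kept, ranges
```

A real VAD is far more robust to background noise than a fixed threshold, but the payoff is the same: less audio to transcribe, and no long silences for the model to hallucinate over.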

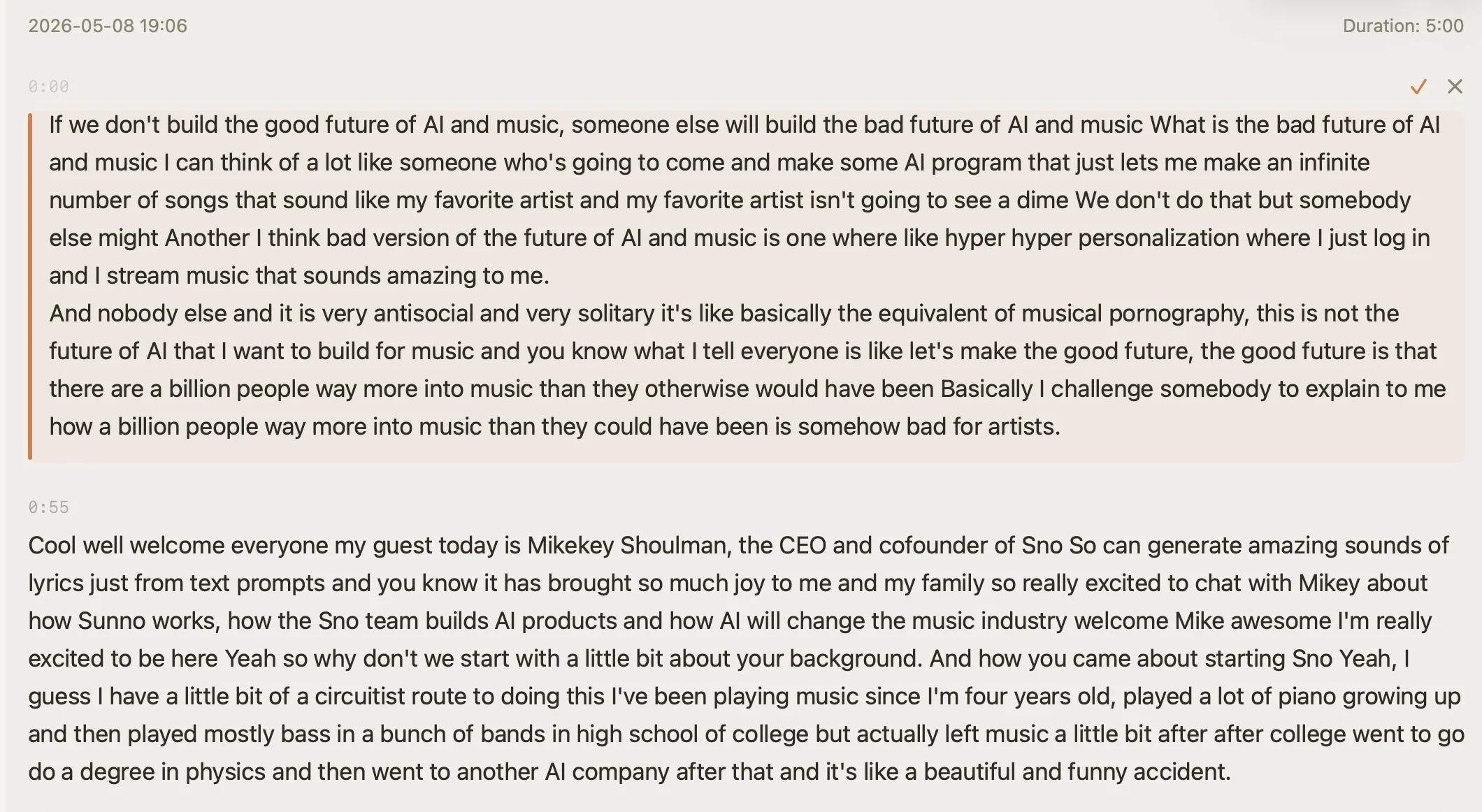

Transcript with timestamps and inline editing — click any segment to jump to that moment in the audio

AI Features — All Local

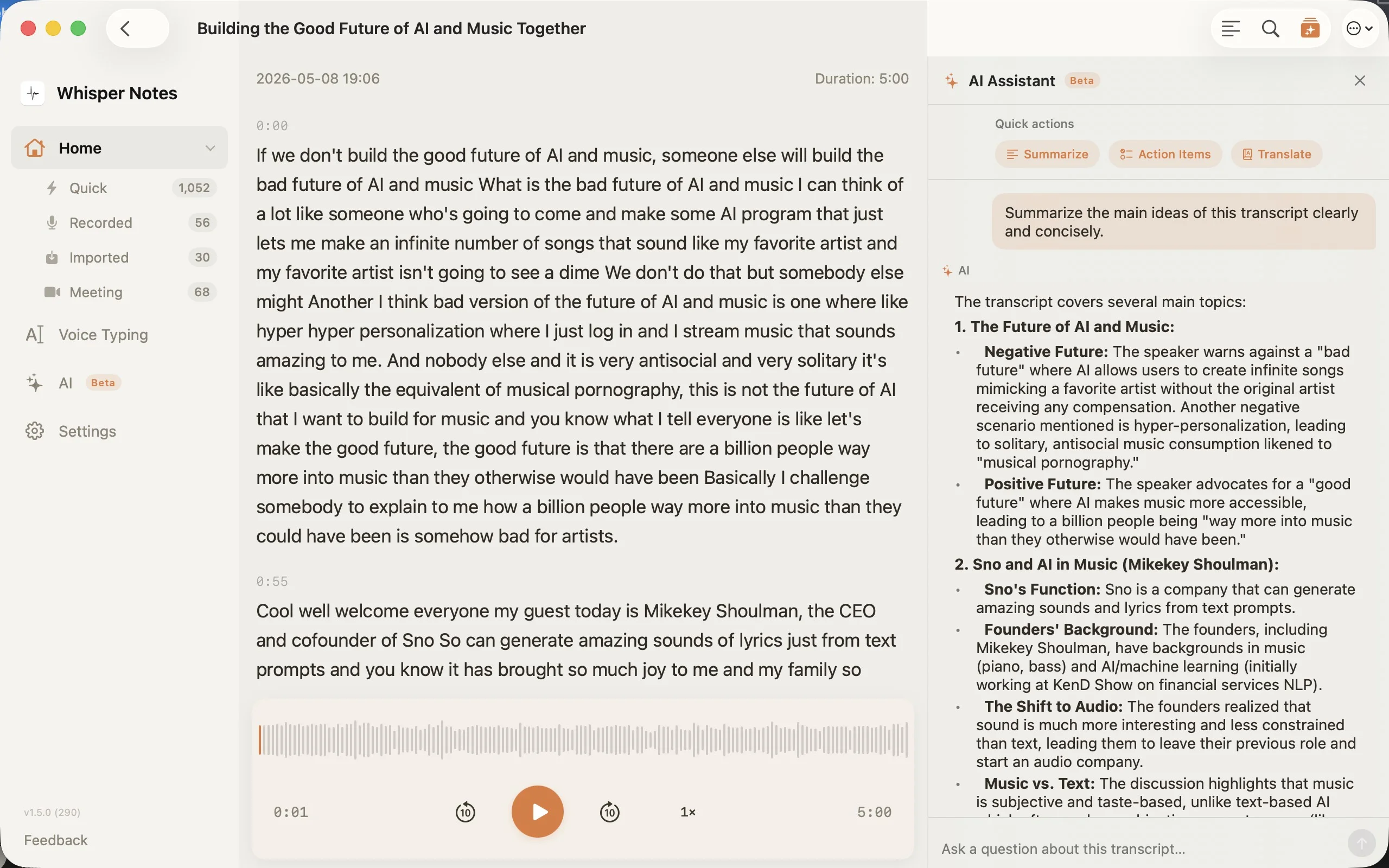

Gemma 4 runs on your Mac. No API key, no cloud call, no usage limits. After transcription:

- Summarize: main points of a 60-minute meeting, in seconds
- Action Items: tasks and deadlines, extracted automatically
- Translate: Apple Intelligence translates the transcript into another language
- Chat: ask "what did we agree on pricing?" and get an answer grounded in the transcript

Gemma 4 AI sidebar — Summarize, Action Items, Translate, and free-form chat, all running locally

Why We Built It This Way

Meeting audio is some of the most sensitive data a company produces. Client negotiations, HR reviews, board discussions, legal consultations — the kind of conversations where the wrong leak ends careers.

Most transcription tools upload this audio to cloud servers, process it there, and store it under their data retention policies. Some add a bot to the call that everyone can see. Some keep your recordings indefinitely for "model improvement."

We took a different approach: everything runs on your Mac. The ASR model, the LLM, and the audio storage are all local. There's no server to breach, no data retention policy to read, and no third party that can be subpoenaed for your recordings. For teams subject to GDPR or HIPAA, or handling conversations covered by attorney-client privilege, this architecture is the point.

How It Compares

| | Whisper Notes | Otter.ai | Fireflies | Jamie |
|---|---|---|---|---|
| Processing | 100% on-device | Cloud | Cloud | Hybrid |
| Bot in call | No | Yes | Yes | No |
| Price | $6.99 once | $16.99/mo (Pro) | from $18/mo | $24/mo |
| Works offline | Yes | No | No | Partial |
| AI summary | Local (Gemma 4) | Cloud | Cloud | Cloud |
| Speaker diarization | Not yet | Yes | Yes | Yes |
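For a rough sense of what the price row means over time, the arithmetic can be sketched as below. It uses only the list prices from the table above and assumes they stay flat, ignoring taxes, annual-billing discounts, and free tiers. The Fireflies figure is its "from" price, and the function names are illustrative.

```python
import math

ONE_TIME_PRICE = 6.99  # Whisper Notes, paid once

# Monthly list prices as quoted in the comparison table.
MONTHLY_PRICES = {
    "Otter.ai (Pro)": 16.99,
    "Fireflies (from)": 18.00,
    "Jamie": 24.00,
}


def subscription_cost(monthly_price, months):
    """Cumulative subscription cost after `months` months."""
    return round(monthly_price * months, 2)


def months_to_exceed(one_time, monthly_price):
    """First whole month in which the subscription total passes `one_time`."""
    return math.floor(one_time / monthly_price) + 1


if __name__ == "__main__":
    for name, price in MONTHLY_PRICES.items():
        year = subscription_cost(price, 12)
        print(f"{name}: ${year:.2f} over 12 months vs ${ONE_TIME_PRICE:.2f} once")
```

Under these assumptions, every subscription in the table costs more than the one-time price within its first month.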

Different Meetings, Different Languages

Pick the model that matches your meeting language:

| Meeting language | Model | Notes |
|---|---|---|
| English / European | Parakeet V3 | ~60× real-time, 6.32% WER, zero hallucinations on silence |
| Chinese / Japanese / Korean | SenseVoice | 52× speed, handles Cantonese, GPU-accelerated via MLX |
| Other languages | Whisper Large V3 Turbo | 99 languages, high accuracy, slower |
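The choice above amounts to a small lookup, sketched here together with a transcription-time estimate from the quoted speed factors. This is an illustration, not the app's actual selection logic. The model identifiers, the language codes, and the short `PARAKEET_LANGS` subset are assumptions. No speed figure is quoted for Whisper Large V3 Turbo, so its estimate is left as `None` rather than guessed.

```python
# Approximate real-time factors as quoted in this post.
SPEED_FACTOR = {
    "parakeet-v3": 60.0,
    "sensevoice": 52.0,
    "whisper-large-v3-turbo": None,  # only described as "slower"
}

CJK_LANGS = {"zh", "yue", "ja", "ko"}  # Chinese, Cantonese, Japanese, Korean
# Illustrative subset of the English + European languages Parakeet V3 covers.
PARAKEET_LANGS = {"en", "de", "fr", "es", "it"}


def pick_model(lang):
    """Map a language code to the model suggested in the table."""
    if lang in CJK_LANGS:
        return "sensevoice"
    if lang in PARAKEET_LANGS:
        return "parakeet-v3"
    return "whisper-large-v3-turbo"


def estimate_seconds(audio_minutes, model):
    """Estimated processing time in seconds, or None if no figure is quoted."""
    factor = SPEED_FACTOR[model]
    if factor is None:
        return None
    return audio_minutes * 60 / factor
```

For a 60-minute English meeting this gives 3600 / 60 = 60 seconds, which matches the "about a minute" figure. The same meeting in Japanese would take a little over a minute at 52×.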

What's Missing

We don't have speaker diarization yet. Right now, Whisper Notes labels audio as "Me" (your microphone) and "Others" (system audio) — which covers most one-on-one and small group meetings. But for a 10-person call where you need to know who said what, that's not enough.
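Labeling by audio source is simple to picture: each stream is transcribed separately, tagged with where it came from, and the two are interleaved by start time. The sketch below assumes each stream already yields timestamped segments. The `Segment` type and `label_and_merge` function are hypothetical names, not the app's internals.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    start: float  # seconds from the start of the recording
    end: float
    text: str


def label_and_merge(mic_segments, system_segments):
    """Tag segments by source and interleave them chronologically.

    Returns a list of (label, start, text) tuples. The sort is stable,
    so if two segments start at the same time, "Me" is listed first.
    """
    labeled = [("Me", s) for s in mic_segments]
    labeled += [("Others", s) for s in system_segments]
    labeled.sort(key=lambda pair: pair[1].start)
    return [(label, seg.start, seg.text) for label, seg in labeled]


if __name__ == "__main__":
    mic = [Segment(0.0, 2.0, "Hi, thanks for joining."),
           Segment(5.0, 6.0, "Sounds good.")]
    system = [Segment(2.5, 4.5, "Happy to be here.")]
    for label, start, text in label_and_merge(mic, system):
        print(f"[{start:.1f}s] {label}: {text}")
```

This also shows the limitation: everything arriving through system audio collapses into one "Others" label, no matter how many people are speaking.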

It's the obvious next step and we're working on it. The goal is local diarization that runs alongside Parakeet V3 and SenseVoice, without sending audio anywhere.